I spent this New Year’s Eve in a pub with an old friend. We’d covered the normal topics of conversation – the love lives of mutual friends, why our nostril hair is sprouting, how everything was better when we were young. Conversation shifted to our work, and I started rambling about my life as an M&E consultant.

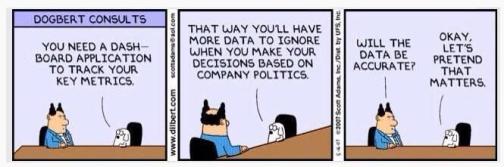

“It’s all so pointless,” I said. (I’d had several pints at this point.) “I spend my time helping charities to collect high quality monitoring data – and then they just ignore it.”

“Why is that?” my friend asked. “That seems completely stupid.”

And I was stumped for an answer. Development charities are full of bright, enthusiastic, often very nice people, doing amazing things with limited resources. Why are they so slow to use monitoring data that could improve the effectiveness of their work? I spluttered a bit, then changed the topic and we spent the rest of the evening trying to balance a pint glass on a spoon. But it is an important question, and I’ve spent some time since then thinking about why we are so slow to learn from monitoring data. Here is a short, non-exclusive list of reasons that I’ve encountered through my time in the sector.

1) Monitoring frameworks are useless. One reason why many programmes don’t use monitoring data effectively is that the monitoring data they collect is rubbish. Too many monitoring frameworks are geared towards measuring a small number of quantitative indicators, sometimes of little relevance to the programme. If the monitoring framework isn’t encouraging programmes to gather and think about a wide range of relevant data, it’s no surprise that they don’t learn much from it.

2) Pressure to spend. Incentives are generally set from the top, whether this is the donors, board of trustees, or senior management of an organisation. If these pressures are primarily to spend money or conduct activities on time, it is no surprise that there is little interest in learning about whether these activities were successful or not. I worked in one humanitarian organisation which was spending money too slowly – a terrible sin in the aid sector. I remember the team leader strutting up and down in a meeting, waving a folder of paper above his head. “You need to spend, people!” he yelled, like a bearded Gordon Gekko. “Get out there and move some money!” Not exactly calculated to inspire thoughtful, reflective practice.

3) Short projects. Even without the clear management dysfunction described above, short-term projects often leave little time for staff to really learn from monitoring information. Imagine that you’re implementing a three-year project. You probably spend at least a year setting up, finding teams, and running through an initial cycle of activities. If you find that this initial cycle of activities wasn’t particularly effective – perhaps you used the wrong partner, worked in the wrong place, or were targeting the wrong problem – this doesn’t leave you much time to fix it. Revising the programme could take another six months, which would mean that you’re halfway through the project without having achieved anything. In this situation, most programmes prefer to ignore any evidence that things are going badly, and plough on regardless.

4) Complex change processes. Sounds obvious, but learning from monitoring data requires some kind of process to allow organisations to feed this learning back into performance. This learning loop is often dysfunctional, and so revising plans and strategies is so much work that it’s easier not to bother. A prime example of this is DFID’s use of the logframe, a document which sets much of the strategic direction for their programmes. Although DFID guidance allows for – indeed, theoretically encourages – revision to the logframe, in practice it’s a massive pain to revise. By the time any changes have gone up through the organisation, been reviewed, argued over, and reviewed again by more senior people who give completely different advice, it just isn’t worth the bother. So although staff on the ground may be learning from monitoring data, there is no real process for this to feed back into the overall programme strategy. (Although this may be changing.)

5) Not having a clue how to make things better. Finally, one of the key reasons development programmes don’t improve based on monitoring data is that they genuinely don’t have a clue how. Development is a tricky business, and programmes typically aim to do ridiculously ambitious things. Developing health systems, promoting economic development, and providing decent educations are all issues that developed countries have wrestled with for centuries – and they don’t do a great job of them. So if your monitoring data shows that the health system isn’t strengthened at all, then it could well be that the programme just hasn’t a clue what to do, and so continues doing the same thing that it knows isn’t working.

Ultimately, good use of monitoring data comes down to strong leadership. Senior management needs to understand the importance of monitoring, and put resources and time into it accordingly. They need to resist organisational incentives to spend money, or to run projects badly, and actually care about what they’re doing. And they need to have a clear idea of what they can do, or inspire others to get a clear idea, and not be afraid to close down projects when necessary.

See a great response from Elina Sarkisova: “Could Paying for Results (finally) Help us Learn?” (and if you’re a real aidleap fan, then scroll down to the bottom to see our response to her response…)

And also see our previous blog in this series: What have indicators ever done for us?

Organisations are getting more and more occupied with growth, scale and mergers – huge organisational changes that tie up an enormous amount of financial and human resources in something internal: getting the organisations running, rather than getting the projects on the ground running.

What examples are you thinking of? It’s probably true that an obsession with growth can be a distraction – though it can also create new opportunities for learning and innovation, I think.

This is really interesting. Do you have any examples of donors where changes are easier? From what you’ve seen, are NGOs that have lots of lovely flexible core funding any better at using their monitoring info?

Very interesting! My job is programme learning advisor. I’ve also had those huge moments of doubt when I think that we will just never learn, but they’re surpassed by the global enthusiasm and energy of my colleagues – we want to learn, we just don’t know how. (Answers on a postcard please…) I’m interested to see that in your view, good monitoring leads to good learning. Have you seen good examples of that? Also, sure, the L in MEAL stands for learning, but that’s only one of the ways – are you also looking into other ways in which we can capture and apply learning? Thanks

Thanks Audrey and Kate for your comments. I think good monitoring is a prerequisite for good learning, but certainly not enough! I have seen good examples of organisations really monitoring carefully and making decisions based on it. I don’t think core funding necessarily makes a difference – it can remove one limitation but brings other pressures (like the need to satisfy a fundraising dept). One useful principle is perhaps to start by scrapping M&E departments… Trying to make it part of everyone’s jobs rather than a specialised function.

What do you mean by other ways to capture and apply learning? Can you give an example?

To paraphrase Hemingway: Strategize drunk, monitor sober

Ah, scrapping M&E departments. I just don’t know what to think about that, having worked in one for the past year. On the one hand, yes, having M&E in a silo definitely encourages a degree of complacency among programme staff about their own commitment to learning. On the other, scrap it entirely and it could go the way of gender mainstreaming – putting yet another set of expectations on people’s already full plates (especially in humanitarian work). Once it’s everybody’s responsibility, it becomes nobody’s.

From my (very) limited experience the best results I’ve seen have come from having both a strong M&E department AND committed programme managers who are fully bought-in to the value M&E can add to their programmes. Which I suppose depends, yet again, on interpersonal relationships as much as anything else.

Just two quick comments FWIW. First on the siloing of M&E. I head a small M&E team in my organization, but we are constantly trying to take a “wholesale” approach. And have the “retail” work done by the programs within their projects. It’s the best approach I think for our org. Second, regarding using monitoring data. Don’t underestimate the sheer inertia that exists in decisionmaking processes. Organizations get into habits and rhythms that are hard to disrupt with new information. -Andy (@alb202)

Whether it is monitoring data or any other kind of change, the question is how we do battle with the inevitable tendency of organizations to perpetuate established procedures and modes, even if they are contradictory to our goals. How do we prevent (or reverse) bureaucratic inertia? I’m still learning on this one…

The biggest problem I see is that the Project Design and/or Contracts people are not prepared or able to keep up with M&E. They have often over-designed a project without giving space for testing their hypothesis or accounting for outside factors. This rigidity means that any change to PD means a modification, rather than working within a wider scope, and contract officers then get involved.

Great article! I would also add:

1. Lack of Incentives from the Donor for Course Correction: I think implementers get wary of sharing data that indicates something isn’t going well and needs to be changed. It could make them look bad, and depending on their donor counterpart, sharing this information may not be encouraged. It may result in modifications, may cause political problems within the donor agency, etc. So is the donor really incentivizing course correction? If not, this is a major barrier and why staff may not feel like it is worth trying even when things can be pushed through internally. Related to this, I think in some cases implementers get so used to being so ‘responsive’ to the donor that they only really do anything new when requested to do so. So if the donor isn’t talking about course correction or really asking about M&E data, why bother doing something about it?

2. Structural divide between M&E/Learning and Program Implementation: When M&E is perceived to be this separate function that only gives the implementing team feedback (typically about what needs to be fixed, not what is working), then a very real divide emerges between M&E and program implementation teams. Almost naturally, program implementation teams resist the feedback because of all the other reasons laid out in the article. But, when program implementation teams generate and realize the learning on their own, they typically do something about it because it’s coming from them, not from ‘outsiders.’ In my experience a few things can help change this dynamic – 1. M&E staff focusing on what is working well and making sure even more of that feedback is provided to the program team. 2. Management reducing barriers and fostering an environment where M&E is an integral part of implementation, asking about it, and showing it matters for effective implementation. 3. Basic M&E training for staff so they can uncover and internalize learning on their own. 4. M&E staff facilitating learning among program implementation teams to help them come to their own realizations after hearing beneficiary feedback or seeing the data (meaning, stop short of making the conclusion and issuing a verdict; better to present data and facilitate discussions about it and let the implementation team make some of those tough conclusions and ways to course correct).

3. The last is laziness. It’s sad to say, but that’s just a big part of it. It’s hard to change and people have other things to keep themselves busy.