This is the third in our series on DFID’s monitoring systems. Our previous blog discussed our analysis of over 600 Annual Reviews from DFID.

I’ve previously mocked DFID’s Annual Reviews on this blog. In the spirit of constructive criticism that (on a good day) pervades Aid Leap, it’s now time to say something more detailed about why they don’t work, and how they might work better.

Annual Reviews are DFID’s primary way of monitoring a programme. They generate huge amounts of paperwork – with an estimated twenty million words available online – alongside a score ranging from ‘C’ to ‘A++’, with a median of ‘A’. If a programme receives two Bs or a single C, it will be put under special measures. If no improvement is found, it can be shut down.

This score is based on the programme’s progress against its logical framework (logframe), which defines the outputs the programme is to deliver. Each of these outputs is assessed through pre-defined indicators and targets. If the programme exceeds its targets, it is given an A+ or an A++. If it meets them, it gets an A, and if it falls short, it gets a B or a C.

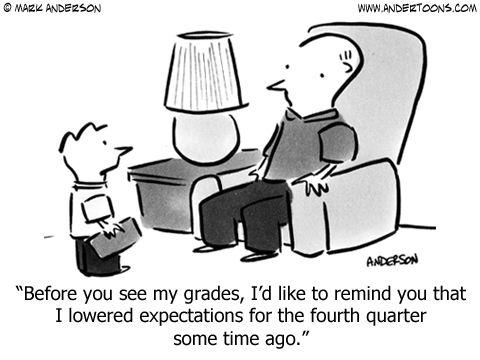

It’s a nice idea. The problem is that output-level targets are typically set by the implementer during the course of the programme. This means that target-setting quickly becomes a game. Unwary implementers who set ambitious targets will soon find themselves punished at the Annual Review. The canny implementer will try to set targets at the lowest level that DFID will accept. Over-cynical, perhaps; but this single score can make or break a career (and in some cases, trigger payment to an implementer), so there is every incentive to be careful about it.

A low Annual Review score, consequently, is ambiguous. It could mean that the implementer was bad at setting targets, or insufficiently aware of the game they are playing. Maybe a consultant during the inception phase set unrealistic targets, confident in the knowledge that they would not be staying on to meet them. Maybe external circumstances changed and rendered initially plausible targets unrealistic. Or maybe the programme design changed, so the initial targets became irrelevant. Of course, the programme might also have been badly implemented.

Moreover, the score reflects only outputs, not outcomes. A typical review dedicates just a single page to outcomes, and fifteen to twenty pages to progress against outputs. By including only outputs in the scope of the Annual Review, DFID incentivises the implementer to focus on outputs at the expense of outcomes, which makes no sense. The best logframes that I’ve seen implicitly recognise this problem by putting outcomes at the output level, but this gives implementers even more incentive to set these targets as low as possible.

I don’t want to throw any babies out with the bathwater. I think the basic idea of a (reasonably) independent annual review is great, and scoring is a necessary evil to ensure that reviews get taken seriously by implementers. As I’ve previously argued, DFID deserve recognition for the transparency of the process. I suggest the following improvements to make them a more useful tool:

- All targets should be set by an independent entity, and revised on an annual basis. It simply doesn’t make sense to have implementers set targets that they are then held accountable for. They should be set by a specific department within DFID, and revised as appropriate in collaboration with the implementer.

- Scoring should incorporate outcome-level targets, where appropriate. It’s not always appropriate. But in many programmes, you can look at outcome-level changes on an ongoing basis. For example, water and sanitation programmes shouldn’t be scored just on whether enough information has been delivered, but on whether anyone is using this information and changing their behaviour.

- For complex programmes, look at process rather than outputs. There’s a lot of talk about ‘complex programmes’, where it’s challenging to predict in advance what the outputs should be. This problem is partially addressed by allowing targets to be revised on an annual basis. In some cases, moreover, there is an argument for more process targets. These look not just at what the organisation is achieving, but at how it is achieving it. A governance programme, for example, might be rated on the quality of its research, or the strength of its relationships with key government partners.

- Group programmes together when setting targets and assessing progress. Setting targets and assessing progress for a single programme is really difficult. It’s always possible to come up with a bundle of excuses for any given failure to meet targets – and tough for an external reviewer to know how seriously to take these excuses. The only solution here is to group programmes together, and assess similar types of programmes on similar targets. Of course, there are always contextual differences. But if you are looking at two similar health programmes, even if they are in different countries, at least you have some basis for comparison.

- Clearly show the change in targets over time. At the moment, logframes are re-uploaded on an annual basis, making it difficult to see how targets have changed. If there were a clear record of changes in logframes and targets, it would be much easier to judge the progress of programmes. I’m not sure whether this should be public (it might not pass the Daily Mail test), but DFID should certainly be keeping a clear internal log.